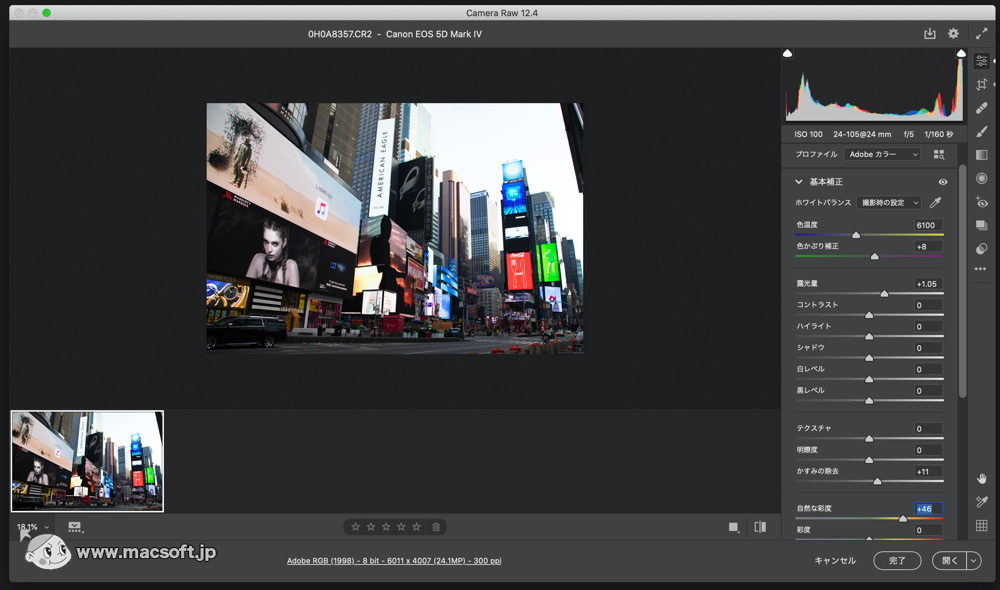

Adobe Super Resolution and Topaz Gigapixel AI

Adobe just released a new update to Camera Raw with a feature they call Super Resolution (this should be available in Lightroom soon). The main point of this update is to use AI and machine learning techniques to learn how to upscale an image to a higher resolution. Incidentally, this is the approach used by Marc Levoy and his team in developing the Google Pixel series of smartphone cameras. In traditional upscaling methods, one basically stretches the image to cover more pixels, and it works much like curve fitting in algebra (i.e. …). The difference with AI methods is that they can actually manufacture details and textures rather than just scale what exists.

The obvious intent of this update is to compete with programs like Topaz Gigapixel AI for upscaling. Topaz products have become quite popular for fixing less-than-perfect image captures, and I know many photographers use Topaz at least occasionally. Because I like to make large prints, and I have a bit of OCD when it comes to pixel peeping, both these products are of great interest to me (plus they are very cool uses of technology). So I decided to run some tests on Adobe’s Super Resolution and compare it to both Gigapixel AI and Bicubic Smoother, which I think is the best default in the LR/PS world.

Note 1: it’s always difficult to compare upscaling methods because they often include different amounts of sharpening.

If I ever want to make a large print of a drop art photo, this will come in very handy. I make those photos using a macro lens, so I position the lens far enough away from the subject to keep everything in focus. This requires that I crop considerably and end up with an image file that is far smaller than my typical files from all the other types of photography that I do. (Focus stacking isn't an option because there would not be enough time for the flash to recycle or for the lens to acquire autofocus.) Knowing that I can so easily quadruple the image resolution is quite comforting.

I started typing this after post #2 and I haven't read the intervening posts yet. Artificial Intelligence (AI) and Machine Learning are being touted for almost everything these days, and they do produce some amazing results. I am no expert, but I did use a neural network (NN) technique in a research project several years ago, so I know a little about it.

It works by constructing a network of nodes with several layers, each layer having progressively fewer nodes. Each node is assigned a "weight", or multiplier, between 0 and 1. You present the first layer with a set of data from which you want to predict a final outcome. The data is then processed through the layers, where the weights are applied to it. The final result is compared to a known, desired result. This process is repeated, using some algorithm to adjust the weights, until the final result is sufficiently close to the target. The network is then assumed to be "trained" and can be used to predict an unknown output from a new set of data.

I'm sure the newer methods are much more sophisticated than this, but I still wonder how the designers at Adobe set the "known" result when training the networks. We found that with our approach, the farther the data set was from our training set, the poorer the final prediction. Thus I would predict that the results will be excellent on many images, but not so good on others.

As always, 'the proof is in the pudding', and I haven't tried it, so take my comments with a large grain of salt.
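The training procedure described in the NN explanation can be sketched in a few lines of NumPy. In that procedure, data flows through layers with progressively fewer nodes and the weights are applied along the way. The result is compared to a known target, and the weights are adjusted and the pass repeated until the result is close enough. This is a minimal illustration under stated assumptions, not how Adobe's Super Resolution works. Several details are choices made only for this sketch: the 3-2-1 layer sizes, the sigmoid activations, the absence of bias terms, plain gradient descent as the "algorithm to adjust the weights", the toy target (the mean of three inputs), and the helper names `forward` and `train`. Weights start between 0 and 1 as described, but gradient descent is free to move them outside that range. The last lines echo the observation that predictions get worse for data far from the training set. Inputs drawn from a range the network never saw give a much larger error. The effect is exaggerated here because a sigmoid output can never exceed 1.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def forward(x, w1, w2):
    """Push data through the layers: 3 input nodes -> 2 hidden -> 1 output."""
    h = sigmoid(x @ w1)
    y = sigmoid(h @ w2)
    return h, y


def train(x, target, lr=0.5, tol=1e-3, max_epochs=5_000, seed=0):
    """Repeat: forward pass, compare with the known target, adjust weights."""
    rng = np.random.default_rng(seed)
    # Initial weights between 0 and 1, as in the description (an assumption
    # of this sketch; real networks usually use other initialisations).
    w1 = rng.uniform(0.0, 1.0, size=(3, 2))
    w2 = rng.uniform(0.0, 1.0, size=(2, 1))
    losses = []
    for _ in range(max_epochs):
        h, y = forward(x, w1, w2)
        err = y - target
        loss = float(np.mean(err ** 2))   # distance from the desired result
        losses.append(loss)
        if loss < tol:                    # "sufficiently close": stop early
            break
        # Gradient descent: backpropagate the mean-squared error.
        d_out = 2.0 * err * y * (1.0 - y) / len(x)
        d_hid = (d_out @ w2.T) * h * (1.0 - h)
        w2 -= lr * (h.T @ d_out)
        w1 -= lr * (x.T @ d_hid)
    return w1, w2, losses


rng = np.random.default_rng(1)
x_train = rng.uniform(0.0, 1.0, size=(200, 3))
y_train = x_train.mean(axis=1, keepdims=True)
w1, w2, losses = train(x_train, y_train)

# New data drawn from a range the network never saw during training.
x_far = rng.uniform(3.0, 4.0, size=(50, 3))
y_far = x_far.mean(axis=1, keepdims=True)

near_mse = float(np.mean((forward(x_train, w1, w2)[1] - y_train) ** 2))
far_mse = float(np.mean((forward(x_far, w1, w2)[1] - y_far) ** 2))
print(f"training loss {losses[0]:.4f} -> {losses[-1]:.4f}")
print(f"error near training data: {near_mse:.4f}, far from it: {far_mse:.4f}")
```

Running it should show the training loss falling and a far larger error on the out-of-range inputs. The exact numbers depend on the random seeds.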

I just now tried it on two different raw files, a NEF and a DNG, and the process worked fine. Very surprisingly, the Enhance Preview window incorrectly indicates that using the process doubles the image resolution. Once the process is completed, ACR even reports that the resolution is four times greater than in the original image file.
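Adobe describes Super Resolution as doubling the linear resolution, meaning both the width and the height double, so the total pixel count goes up by a factor of four. That may be why the Enhance Preview window says "doubles" while ACR reports four times the resolution. The sketch below shows that arithmetic using a plain bilinear interpolation upscale in NumPy, the kind of "stretching" that traditional methods do. It is not Adobe's algorithm or Photoshop's Bicubic Smoother, and the function name `upscale_bilinear` is hypothetical. Every output pixel is a weighted average of neighbouring source pixels, so, unlike the AI methods described earlier, it cannot invent values outside the original range.

```python
import numpy as np


def upscale_bilinear(img, factor=2):
    """Stretch a 2-D grayscale array by `factor` in width and height.

    Each new pixel is interpolated from the nearest source pixels, the
    'curve fitting' style of traditional upscaling (illustrative only).
    """
    img = np.asarray(img, dtype=float)
    h, w = img.shape
    # Map each output pixel centre back to a fractional source coordinate.
    ys = np.clip((np.arange(h * factor) + 0.5) / factor - 0.5, 0, h - 1)
    xs = np.clip((np.arange(w * factor) + 0.5) / factor - 0.5, 0, w - 1)
    y0 = np.floor(ys).astype(int)
    x0 = np.floor(xs).astype(int)
    y1 = np.minimum(y0 + 1, h - 1)
    x1 = np.minimum(x0 + 1, w - 1)
    wy = (ys - y0)[:, None]   # vertical blend weights, one per output row
    wx = (xs - x0)[None, :]   # horizontal blend weights, one per output column
    top = img[y0][:, x0] * (1 - wx) + img[y0][:, x1] * wx
    bottom = img[y1][:, x0] * (1 - wx) + img[y1][:, x1] * wx
    return top * (1 - wy) + bottom * wy


small = np.array([[0.0, 100.0],
                  [50.0, 200.0]])
big = upscale_bilinear(small)

print(small.shape, "->", big.shape)   # (2, 2) -> (4, 4)
print(small.size, "->", big.size)     # 4 -> 16: 2x width and height, 4x pixels
```

The same factor applies to a real crop: doubling both dimensions of any image multiplies its pixel count by four.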
